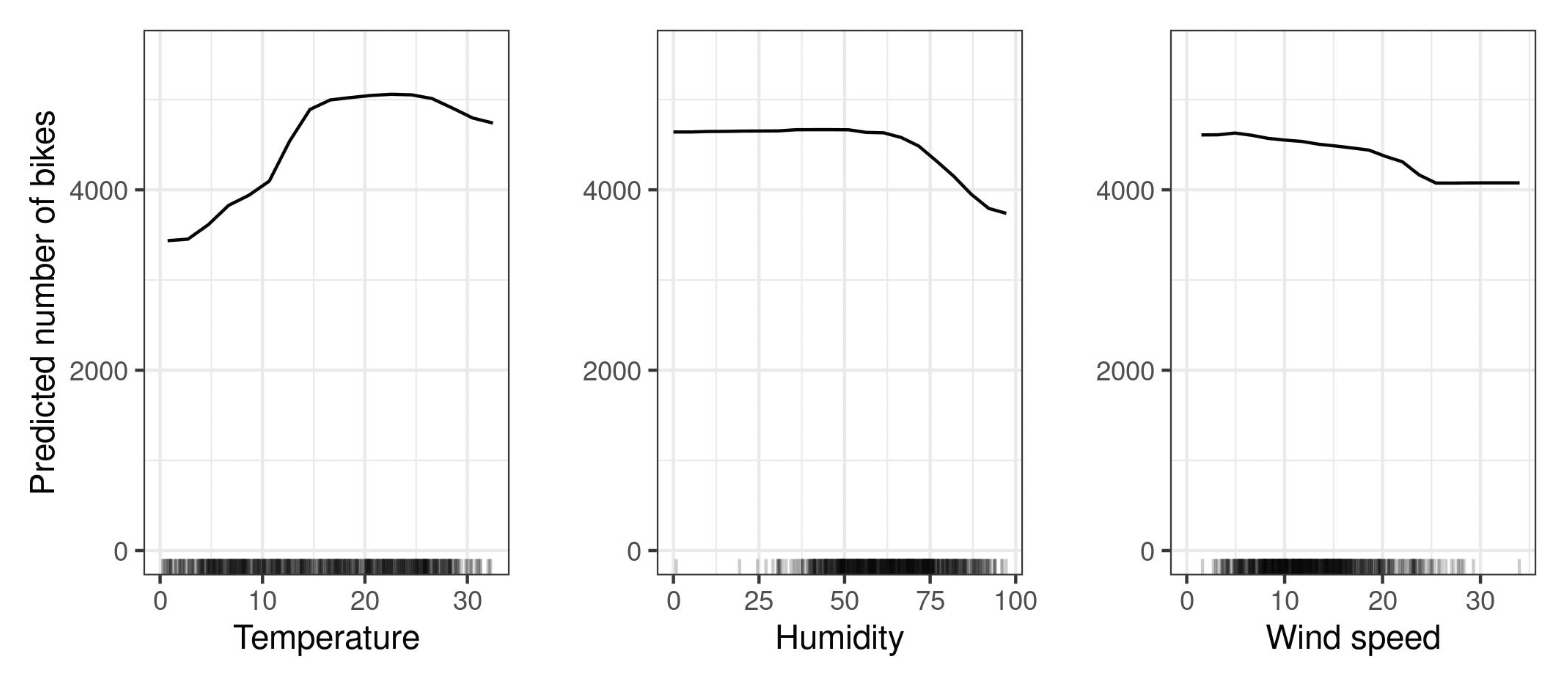

Partial Dependence Plot

Definition

The marginal effect that one or two features have on the predicted outcome of a machine learning (ML) model.

A partial dependence plot can show whether the relationship between the target and a feature is linear, monotonic or more complex. For example, when applied to a linear regression model, partial dependence plots always show a linear relationship.

$x_S$ is a subset of features (one or two) that are independent of the others, and $x_C$ are all other features, i.e. the ones not in $S$. We assume that all features in $C$ are uncorrelated with the ones in $S$.

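The partial dependence function is

$$\hat{f}_S(x_S) = E_{x_C}\left[\hat{f}(x_S, x_C)\right] = \int \hat{f}(x_S, x_C)\, d\mathbb{P}(x_C),$$

where $\hat{f}$ is the ML model. We can estimate this integral with the Monte-Carlo method for integrals. The result is the simple average over all $n$ data points, where we fix the features in $S$ to the value $x_S$ and keep the observed values $x_C^{(i)}$ for all other features:

$$\hat{f}_S(x_S) = \frac{1}{n}\sum_{i=1}^{n} \hat{f}\left(x_S, x_C^{(i)}\right)$$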
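A minimal NumPy sketch of the Monte-Carlo estimate above, assuming the model is any callable that maps an `(n, p)` array to `n` predictions. The function name `pd_monte_carlo`, the grid and the toy linear "model" are hypothetical and only serve as illustration. Applied to a linear model, the estimated curve is linear, as stated in the definition.

```python
import numpy as np

def pd_monte_carlo(predict, X, feature, grid):
    """Hypothetical helper: Monte-Carlo estimate of the partial dependence on one feature.

    For every grid value z, the column `feature` (x_S) is overwritten with z in all rows,
    the other columns (x_C) keep their observed values, and the n predictions are averaged.
    """
    X = np.asarray(X, dtype=float)
    pd_values = np.empty(len(grid))
    for k, z in enumerate(grid):
        X_mod = X.copy()
        X_mod[:, feature] = z                    # fix x_S to the grid value
        pd_values[k] = predict(X_mod).mean()     # average over the observed x_C^(i)
    return pd_values

# Usage with a toy linear "model" f(x) = 2*x0 - x1 + 0.5 (assumed for illustration)
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 2))
model = lambda A: 2 * A[:, 0] - A[:, 1] + 0.5
grid = np.linspace(-2, 2, 5)
pd0 = pd_monte_carlo(model, X, feature=0, grid=grid)
# pd0 rises by 2 per unit of x0: the PDP of a linear model is a straight line
```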

PDP-based Feature Importance

We can use the flatness of the PDP to determine the Feature Importance.

- flat → low importance

- strongly varying (high variance of the PDP values) → high importance
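One way to turn "flatness" into a number, sketched here under the assumption of numerical features, is the standard deviation of the PDP values across the grid: a flat curve gives a value near 0. The helper names `pd_curve` and `pdp_importance`, the grid over the observed min/max, and the toy model are hypothetical choices for this example.

```python
import numpy as np

def pd_curve(predict, X, feature, grid):
    # Monte-Carlo partial dependence: fix the feature to each grid value, average predictions
    out = []
    for z in grid:
        X_mod = X.copy()
        X_mod[:, feature] = z
        out.append(predict(X_mod).mean())
    return np.array(out)

def pdp_importance(predict, X, feature, n_grid=20):
    # Hypothetical importance score: std of the PDP over a grid spanning the observed range
    grid = np.linspace(X[:, feature].min(), X[:, feature].max(), n_grid)
    return pd_curve(predict, X, feature, grid).std(ddof=1)

rng = np.random.default_rng(1)
X = rng.uniform(-1, 1, size=(300, 3))
model = lambda A: 5 * A[:, 0] + 0.5 * A[:, 1]   # toy model that ignores x2 entirely
importances = [pdp_importance(model, X, j) for j in range(3)]
# x0 (steep PDP) > x1 (shallow PDP) > x2 (flat PDP, importance 0)
```

The flat PDP of `x2` gives a score of zero, matching the "flat → low importance" rule.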

Examples

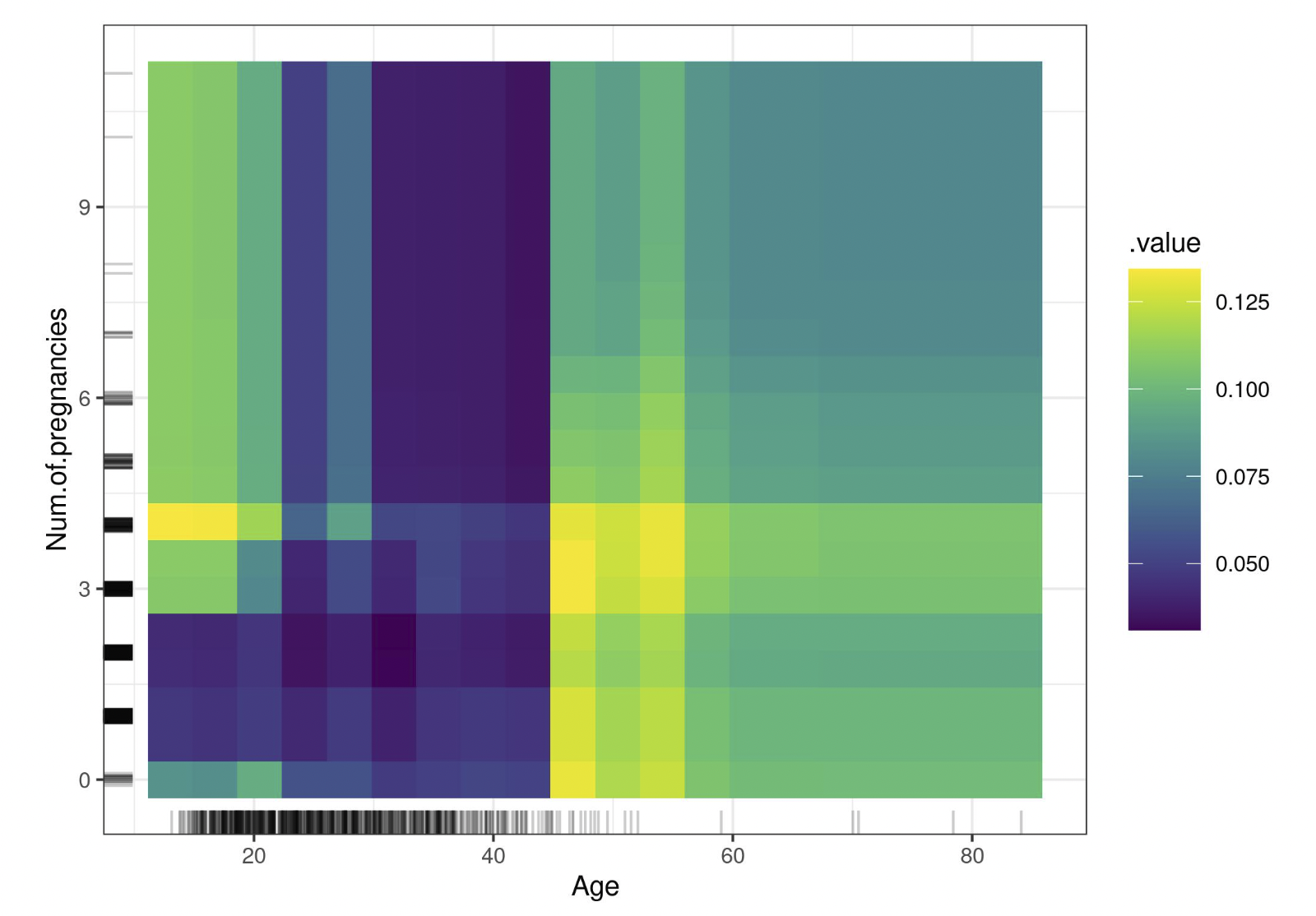

For two features, the partial dependence can be shown as a heatmap.
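A sketch of how the values behind such a heatmap could be computed: both features in $S$ are fixed to every combination of grid values and the predictions are averaged over the data. The helper `pd_2d`, the grids and the toy interaction model are assumptions for illustration; the resulting matrix could then be drawn with any heatmap function, e.g. matplotlib's `imshow`.

```python
import numpy as np

def pd_2d(predict, X, features, grid_a, grid_b):
    """Hypothetical helper: entry [i, j] fixes features[0] to grid_a[i] and
    features[1] to grid_b[j] in every row, then averages the predictions."""
    fa, fb = features
    Z = np.empty((len(grid_a), len(grid_b)))
    for i, a in enumerate(grid_a):
        for j, b in enumerate(grid_b):
            X_mod = X.copy()
            X_mod[:, fa] = a
            X_mod[:, fb] = b
            Z[i, j] = predict(X_mod).mean()
    return Z

rng = np.random.default_rng(2)
X = rng.normal(size=(200, 3))
model = lambda A: A[:, 0] * A[:, 1] + A[:, 2]   # toy model with an x0-x1 interaction
grid = np.linspace(-1, 1, 3)
Z = pd_2d(model, X, (0, 1), grid, grid)
# Z[i, j] = grid[i] * grid[j] + mean(x2): the interaction shows up as a saddle in the heatmap
```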

Pros

- intuitive

- clear interpretation

- easy to implement

- “causal” interpretation (at least in the model context, not in the real world though)

Cons

- at most two features can be shown at once

- assumes that the features in $S$ are independent of the other features

  - otherwise the average includes data points that are extremely unlikely (see rug plot)

  - → use an Accumulated Local Effect Plot instead

- heterogeneous effects might be hidden, because the PDP only shows the average effect

- doesn't show the feature distribution

  - → use a rug plot (see example)
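To see how averaging can hide heterogeneous effects, here is a small constructed example (toy data and model, assumed for illustration): `x0` has a strong effect, but its sign flips with `x1`, and half of the rows have each sign. The per-row curves (what individual conditional expectation, ICE, plots show) rise or fall, while their average, the PDP, is flat.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 1000
x0 = rng.uniform(-1, 1, n)
x1 = np.tile([-1.0, 1.0], n // 2)     # half the rows have x1 = -1, half have x1 = +1
X = np.column_stack([x0, x1])

def model(A):
    # x0 has a strong effect, but its sign depends on x1 (an interaction)
    return A[:, 0] * A[:, 1]

grid = np.linspace(-1, 1, 5)
# per-row curves: ice[i, k] = prediction for row i with x0 set to grid[k]
ice = np.empty((n, len(grid)))
for k, z in enumerate(grid):
    X_mod = X.copy()
    X_mod[:, 0] = z
    ice[:, k] = model(X_mod)

pd_x0 = ice.mean(axis=0)   # the PDP is the average of the per-row curves
# pd_x0 is flat (all zeros): the rising and falling curves cancel out,
# although x0 clearly matters for every single prediction
```

A flat PDP here would wrongly suggest that `x0` is unimportant, which is why the PDP-based importance should be read with this limitation in mind.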

In sklearn

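Implemented in `sklearn.inspection` (`partial_dependence` for the values, `PartialDependenceDisplay` for plotting); alternatively, you can use the PDPBox package.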
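A minimal sketch of the scikit-learn route, assuming a recent scikit-learn version and a toy linear regression fitted on synthetic data. `method="brute"` averages predictions over `X` (the Monte-Carlo estimate from the definition), and `kind="average"` returns only the PDP. For a plot, `PartialDependenceDisplay.from_estimator(model, X, features=[0])` can be used instead (requires matplotlib).

```python
import numpy as np
from sklearn.inspection import partial_dependence
from sklearn.linear_model import LinearRegression

# Synthetic data (assumed for illustration): y depends linearly on both features
rng = np.random.default_rng(4)
X = rng.normal(size=(500, 2))
y = 3 * X[:, 0] - 2 * X[:, 1] + rng.normal(scale=0.1, size=500)

model = LinearRegression().fit(X, y)

# Brute-force PDP of feature 0 on an evenly spaced grid of 20 values
result = partial_dependence(model, X, features=[0], kind="average",
                            grid_resolution=20, method="brute")
pd_avg = result["average"][0]   # shape (20,) for a single-output regressor
# pd_avg increases linearly, with slope close to the fitted coefficient of x0 (about 3)
```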